Providing New Evidence: Using AI in Merger Proceedings

Share

Written by Enrico Alemani and Jaime Coronado

Natural language processing (“NLP”) is a branch of Artificial Intelligence (“AI”) focused on how computers can understand language (e.g. written text) in much the same way human beings do. In this article, the data science team explores how NLP can be a new helpful tool in merger proceedings, including an application to a recent merger case.

Introduction

In simple terms, Artificial Intelligence (“AI”)[1] is a field of computer science that uses large, rich datasets to enable problem-solving. It also includes sub-fields like machine learning and deep learning, which make up most of the AI surrounding us today: machines learning how to perform a specific task.

Lately, AI has been getting a lot of attention, and for good reasons: recent developments have led to results that have changed our day-to-day life. We ask questions to Siri or Alexa as we do with friends and family. We watch shows based on Netflix’s recommendations, and scroll through news feeds, videos and photos selected by algorithms.

AI is not limited to our day-to-day personal life though – algorithms are also coming into merger proceedings, bringing a new set of analytical tools.

Potential merger effects: data and analysis

Regulators worldwide seek to collect relevant information to assess the possible effects of mergers. This information is usually gathered in time-intensive procedures where the merging firms, as well as third parties (especially competitors) are asked to provide opinions and data in response to questionnaires. Given the tight deadlines, the burden that this process can create for businesses, and the impossibility of contacting all the available third parties, the collected information might not always reflect all the views of the market.

Information requests, however, are not the only sources of evidence available in merger proceedings. There is a substantial amount of information about companies and industries, including trends and business strategies, publicly available from established sources. For instance, the specialised press or social media posts offer rich content to review market data, understand possible competition concerns, and consider theories of harm that could arise from a merger situation.

Analysing information from the specialised press may allow investigation of the broader picture, including identifying the author of relevant statements and the nature of their relationships with the merging parties. Examples include market competitors whose business models may depend (positively or negatively) on the merger being cleared or blocked, customers who expect lower costs or higher prices post-merger, or independent market commentators whose interest is to keep their readers informed.

Overall, a factual analysis of specialised press statements can approximate the market’s perspective and complement third parties’ views of a given market. However, a manual review of hundreds of press articles can be daunting and prone to subjective judgment. Here is where AI solutions can make a difference.

How machines analyse information

Machine learning is a branch of AI which consists of models trained to perform a given task without being explicitly programmed. Instead, they recognize patterns in the data and make predictions based on statistics, which is the driving force behind machine learning.

Deep learning can be considered a more sophisticated sub-set of the machine learning field, whereby models analyse data with a process inspired by the design and activity of the human brain. Deep learning algorithms use a layered structure, called a network, made of neurons and connections, to build knowledge[2] from the data. The larger the number of layers, the deeper the network.

There exist several real-world deep learning applications currently in use, such as image classification. Figure 2 shows how a neural network classifies pictures of transport vehicles. The deep neural network takes a user’s input image, e.g., a car, squishing it through its layered neurons, which store the model’s knowledge (i.e., the learned features) to predict that the input corresponds to a car with 95% confidence.

Figure 2: Example of image classification

| Source: MathWorks, see https://www.mathworks.com/discovery/convolutional-neural-network-matlab.html. |

This technique is not confined to images but extends to other data types, such as written text. Natural Language Processing (NLP) is a branch of AI focused on how machines can understand language (e.g., written text) like humans do. A common NLP approach is to train models to classify whether a piece of content carries a positive or negative sentiment. Like image classification, the NLP model will assign a positive or negative label to each sentence according to its current “understanding” of the language. This classification task is commonly known as ‘sentiment analysis’.

Natural Language Processing and mergers proceedings

Deep learning models need to be trained on large amounts of text taken from diverse sources (online reviews, tweets, newspapers, blog posts, etc.)[3]. For sentiment analysis, variety and abundance of text examples are crucial for the model to be accurate, including how context and linguistic nuances could turn the use of positive words into a negative classification.[4] This means that case teams will need to collect data and train models, which can take a considerable amount of time and computing power. And not all teams have the luxury to do so.

This is where the Large Language Model (“LLM”) comes into play. LLM is a deep learning system already pre-trained with a large amount of text. It is a general-purpose model that can perform well on various NLP tasks, including text classification and sentiment analysis. Crucially, many LLMs are publicly available, and they are used by the biggest technology companies in the world.[5]

This is a game-changer. It makes it possible for teams who otherwise wouldn’t have the resources or expertise to use deep learning. For merger proceedings, the main advantages of using LLM for sentiment analysis are:

- the speed and relatively low resource requirements allow case teams to form a view of competition in the relevant markets at the early stages of investigation;

- the analysis and the results are replicable by third parties and regulators;

- the possibility for the case team to examine the model’s output; and

- most importantly, being trained on a vast amount of text which is not strictly related to competition and consumer issues means that the model can classify text as if it were an independent decision-maker acting in a non-biased way.

In that sense, the model's input (i.e., text) provides an informed view of the markets and the merger's potential effects. The model's output helps create a common ground for discussing evidence among merging parties and regulators.

Application to a merger case

Compass Lexecon prepared a text classification analysis in the recent merger investigation of the proposed acquisition of Arm by NVIDIA.[6] The European Commission identified several markets where a further in-depth investigation was needed, including the market for central processing units (“CPUs”) used in datacentres.[7]

There are two main platforms in this key market. The first platform is called instruction set architectures (“ISA”) with Arm’s ISA having less than 5% market share. The second platform is the x86 ISA found in Intel and AMD’s CPUs with around 95% market share in the second quarter of 2021.[8]

The text classification analysis helped us assess whether, in the view of market participants and specialised press, the proposed merger would increase the probability of success of the Arm datacentre CPU platform compared to its competitor’s, the x86. A higher likelihood of success of the Arm platform would likely benefit consumers by enhancing competition post-merger compared to the counterfactual situation absent the merger.

To test this hypothesis, we searched the web to identify news articles published by the major specialised press (e.g., Forbes, techrepublic.com) discussing the likelihood of Arm’s success in the datacentre market from 2017 to 2021. Overall, we collected 61 news articles and identified 276 relevant quotes discussing the chance of success of the Arm platform. We then classified them based on whether they addressed a situation (i) absent the transaction or (ii) post-transaction.

For this task, we chose a deep learning model developed by Meta called RoBERTa. At the time, this model achieved state-of-the-art results on a range of NLP benchmarks used to evaluate a model’s language understanding, which is crucial for our task.

RoBERTa is a pre-trained model (i.e., an LLM) trained on a large body of text data and fine-tuned on datasets from different sources, including Wikipedia, books and news articles. In simple terms, RoBERTa learned to predict sentiment in two steps. First, the model developed an understanding of the English language by reading text and predicting missing words within long sequences of terms. Then, the model was given examples of content with positive and negative sentiment.

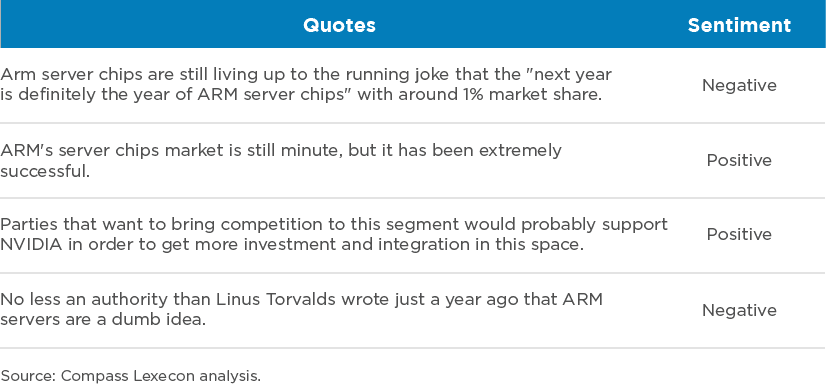

Using RoBERTa, we systematically classified all 276 relevant quotes.[9] The results show that the model “understands” how positive and negative words fit into context while adapting to different linguistic styles. This is one of the added values of deep learning compared to more classical NLP approaches, such as a simple keyword search of positive or negative words within the content. Table 1 shows some examples taken from the model’s output.

Table 1: Examples of positive and negative sentiment quotes.

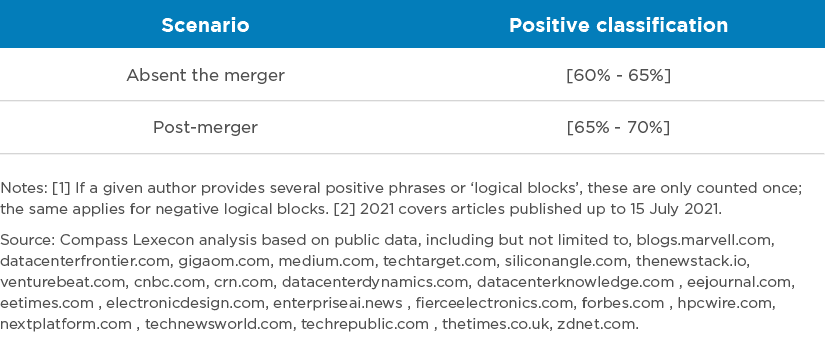

After the model classified each quote, we analysed the average perception of the market by merger scenario. That is, we assessed how specialised press’ perception changed when referring to a post-merger situation, compared to a situation absent the merger. Table 2 below presents a non-confidential summary of our results for the 2020-2021 period.

Table 2: Impact of the transaction on the market perception about the chance of success of a platform, 2020-2021

Our analysis suggests the share of positive perception in the market is up to 10 percentage points higher when referring to the post-transaction scenario. This can be interpreted as the market perceiving that Arm would have better chances of succeeding with the merger than without it. These results provide important insights and additional evidence to the merger investigation that would be difficult to obtain otherwise.

Conclusion

NLP is a powerful and exciting area of data science that provides new analytical methods to generate evidence from textual sources that would otherwise be too costly or time-consuming to analyse. While it is a new technique and must be implemented accurately, when used correctly, it can provide a highly effective tool for revealing an accurate picture of competition in an industry.

Fancy reading more on NLP? Remember to bookmark our article in the Analysis, ‘Using Natural Language Processing in competition cases,’ for a general discussion of the use of NLP in competition policy.

About the Data Science Team

The Compass Lexecon Data Science team was created to bring the latest developments in programming, machine learning and data analysis to economic consulting.

Sometimes this involves applying novel techniques to assess specific questions in an innovative and compelling way. For instance, running a sentiment analysis on social media content related to merging firms can be informative on their closeness of competition, and can supplement the results of a survey.

Other times it is about making work faster, more accurate, and more efficient, especially on cases which involve large datasets.

This short article is part of a series of articles showcasing how data science can lead to more streamlined and robust economic analysis and ultimately to better decisions in competition cases. If you would like to find out more, please do not hesitate to contact us at datascience@compasslexecon.com.

[1]In his 2004 paper, the computer scientist John McCarthy defined artificial intelligence as “[…] the science and engineering of making intelligent machines, especially intelligent computer programs. It is related to the similar task of using computers to understand human intelligence, but AI does not have to confine itself to methods that are biologically observable.” Available at: http://jmc.stanford.edu/articles/whatisai.html (accessed on 17 June 2022).

[2] In machine learning jargon this is also called ‘learn features’.

[3] An AI model is said to be trained based on data. That is, the model has learned to categorise text based on many text examples. This helps the model to provide the likelihood a new phrase is allocated to a particular category. A phrase is then allocated to the class having the highest likelihood of belonging.

[4] We decided to use deep learning models for this analysis after comparing the performance of different classical machine learning techniques, moving from more accessible approaches to more sophisticated techniques like deep learning models. Deep learning proved to have the best performance for our task.

[5] An example is Google’s search engine, which relies on deep learning to retrieve search results for its users (see https://blog.google/products/search/search-language-understanding-bert/).

[6] NVIDIA, NVIDIA to Acquire Arm for $40 Billion, Creating World’s Premier Computing Company for the Age of AI, https://nvidianews.nvidia.com/news/nvidia-to-acquire-arm-for-40-billion-creating-worlds-premier-computing-company-for-the-age-of-ai.

[7] European Commission, Mergers: Commission opens in-depth investigation into proposed acquisition of Arm by NVIDIA, https://ec.europa.eu/commission/presscorner/detail/en/ip_21_5624.

[8] OMDIA, A historic data center quarter with over 15% of servers running on AMD, https://omdia.tech.informa.com/blogs/2021/a-historic-data-center-quarter-with-over-15-of-servers-running-on-amd.

[9] To avoid double counting, for statistical purposes, we consider one count per author. If an author makes a positive and a negative quote, we count this author once in both positive and negative categories.